The prevailing narrative surrounding generative media suggests a “push-button” reality where high-converting ad creative appears instantaneously. For the indie maker or the prompt-first creator, the actual experience is often more abrasive. While generating a single, visually striking image is now trivial, the difficulty lies in the production of a cohesive set—images, videos, and social assets that share the same DNA, lighting, and brand logic. This is where the friction lives.

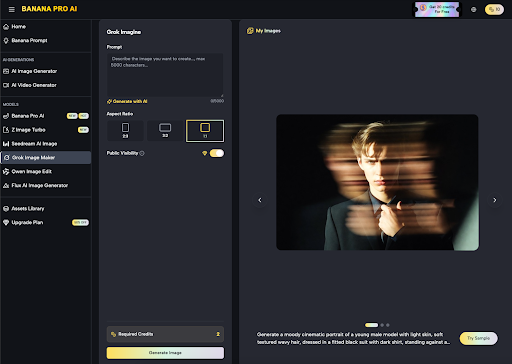

Moving from a single generation to a multi-channel campaign requires more than just a creative eye; it requires an operator’s mindset. You are no longer just “generating”; you are directing an inference engine to maintain visual continuity across varying aspect ratios and media formats. By leveraging the specific controls available in Banana Pro, creators can bridge the gap between a disorganized folder of “cool images” and a professional asset library ready for deployment.

The Consistency Problem in Modern Ad Creative

The primary hurdle in using AI for performance marketing is stylistic drift. If you generate a hero image for a landing page and then try to create three matching Instagram Story ads, a standard model will often shift the color temperature, the character’s facial features, or the texture of the background. This variance erodes brand trust: users notice when the page they land on looks “off” compared to the ad they just clicked.

To combat this, professional workflows rely on a “seed and expand” strategy. You start with a high-fidelity baseline using Nano Banana Pro, which serves as the stylistic anchor. The goal isn’t to get the perfect ad in one shot, but to establish a visual logic that the rest of your tools can follow. This involves identifying specific descriptors—lighting types like “volumetric” or “flat minimalist”—and keeping those variables constant while changing the composition for different platforms.
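One way to keep those descriptors constant is to store them once and assemble every prompt from them. The sketch below is only an illustration: `StyleAnchor`, `build_prompt`, and the descriptor values are hypothetical names chosen for this example, not part of any Banana Pro or Nano Banana Pro interface, and the prompt wording is an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StyleAnchor:
    """Hypothetical container for descriptors that stay fixed across a campaign."""
    lighting: str
    palette: str
    environment: str


def build_prompt(anchor: StyleAnchor, composition: str, aspect_ratio: str) -> str:
    """Combine the fixed style descriptors with a per-placement composition."""
    return (
        f"{composition}, {anchor.environment}, {anchor.lighting} lighting, "
        f"{anchor.palette} palette, aspect ratio {aspect_ratio}"
    )


# Assumed example values; only the composition and ratio vary per placement.
anchor = StyleAnchor(
    lighting="volumetric",
    palette="warm neutral",
    environment="soft-focus interior",
)
placements = {
    "landing_hero": ("product centered with empty space on the left", "16:9"),
    "story_ad": ("product close-up, vertical framing", "9:16"),
}
prompts = {
    name: build_prompt(anchor, composition, ratio)
    for name, (composition, ratio) in placements.items()
}

for name, prompt in prompts.items():
    print(f"{name}: {prompt}")
```

Because the anchor is frozen, a placement cannot quietly change the lighting or palette; any stylistic change has to be made in one place and applies to the whole set.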

Standardizing the Baseline with Nano Banana Pro

For most prompt-first creators, the initial generation is where the heavy lifting happens. Using Nano Banana Pro allows for a level of stylistic rigidity that general-purpose models often lack. When building a campaign for a new product, the operator should focus on the “environmental constants.”

Is the product set in a high-contrast urban environment or a soft-focus interior? Once these constants are locked in within Nano Banana Pro, the creator can then use the Image-to-Image functions to iterate. This is a critical distinction: instead of writing a new prompt for every asset, you are using the primary asset as a structural guide. This significantly reduces the time spent on “prompt engineering” and increases the time spent on actual design composition.

Operationalizing the AI Image Editor

Generating an image is step one. Refining it for a specific ad placement is step two. Most generative tools fail here because they lack a persistent canvas or a way to modify specific regions of an image without altering the whole. The AI Image Editor functionality within the broader ecosystem solves this by allowing for localized adjustments.

If you have a near-perfect visual for a LinkedIn ad but the background is too busy for overlaying text, you don’t re-generate the whole scene. You use the editor to simplify specific quadrants. This “Canvas Workflow” is what separates hobbyist use from production use. It allows you to move elements, adjust scales, and ensure that the “safe zones” for UI elements on platforms like TikTok or Instagram are respected.
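Before placing copy, it can help to compute the usable area numerically. The following is a minimal sketch, assuming the reserved margins are expressed as fractions of the frame; the 15% and 20% values are placeholders, not published platform specifications, and `safe_zone` and `fits_inside` are hypothetical helpers.

```python
def safe_zone(width, height, top, bottom, left=0.0, right=0.0):
    """Return (x0, y0, x1, y1) of the area left after reserving fractional margins."""
    for margin in (top, bottom, left, right):
        if not 0 <= margin < 1:
            raise ValueError("margins must be fractions in [0, 1)")
    if top + bottom >= 1 or left + right >= 1:
        raise ValueError("margins leave no usable area")
    x0 = round(width * left)
    y0 = round(height * top)
    x1 = width - round(width * right)
    y1 = height - round(height * bottom)
    return x0, y0, x1, y1


def fits_inside(box, zone):
    """True if box (x0, y0, x1, y1) lies entirely within zone."""
    return (
        box[0] >= zone[0] and box[1] >= zone[1]
        and box[2] <= zone[2] and box[3] <= zone[3]
    )


# Placeholder margins for a 1080x1920 vertical frame; look up the real values
# in each platform's current ad specifications.
zone = safe_zone(1080, 1920, top=0.15, bottom=0.20)
headline_box = (100, 300, 980, 600)
cta_box = (100, 1500, 980, 1700)
print(zone, fits_inside(headline_box, zone), fits_inside(cta_box, zone))
```

A check like this makes it easy to flag overlays that would collide with interface elements before an asset is exported.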

Limitation Note: It is important to acknowledge that AI-generated text within images remains a point of significant friction. While the models are improving, attempting to generate complex typography directly into a campaign asset often results in “hallucinated” characters. A restrained operator will use Banana AI to generate the visual background and then use traditional design software or the platform’s native tools to overlay the actual copy. Relying on the AI for pixel-perfect brand fonts is currently a recipe for frustration.

Bridging Static and Motion Assets

A modern campaign isn’t complete without video. However, the jump from a static image to a 6-second motion graphic is where many workflows break down. The challenge is maintaining the specific aesthetic established by Nano Banana throughout the video generation process.

When moving from static to motion, the “Image to Video” workflow is the most reliable path. By feeding a high-quality static frame generated in Nano Banana Pro into the video engine, you provide the model with a fixed starting point. This reduces the temporal noise that often plagues AI video. Instead of the model “guessing” what the scene looks like, it generates motion starting from the existing pixels.

Even with this approach, consistency is not guaranteed. Creators must be prepared for “motion artifacts”—strange warping in fast-moving objects or background elements that shift shape. In an ad context, these glitches can be distracting. The professional response is to keep motion subtle. A slight camera pan or a gentle “breathing” effect on the background is often more effective and stable than trying to generate complex human movement, which frequently leads to an “uncanny valley” effect.

Landing Page Support and Asset Scaling

Landing pages require a different type of visual hierarchy than social ads. Where a social ad needs to be loud and disruptive, a landing page hero image needs to be clean and supportive of the value proposition.

When using Nano Banana to create these assets, the focus shifts to “negative space.” An image with too much detail will clash with your H1 headlines and CTA buttons. Operators should intentionally prompt for “uncomplicated backgrounds” or “shallow depth of field” to ensure the visual doesn’t compete with the site’s conversion elements.

Furthermore, the need for multiple sizes—desktop, tablet, and mobile—requires a tool that understands aspect ratio without stretching the subject. Using the canvas tools to “outpaint” or extend the borders of a 16:9 image to fit a 9:16 mobile frame is a high-value move. It allows you to use the same core creative across the entire funnel, reinforcing brand recognition every time the user sees the asset.
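The arithmetic behind that extension is worth checking before you start. The sketch below computes the smallest canvas of a target ratio that contains the source image at its original scale, along with how many pixels must be newly generated; `outpaint_canvas` is a hypothetical helper, not a function of any specific tool.

```python
def outpaint_canvas(width, height, target_w, target_h):
    """Return (canvas_w, canvas_h, extra_w, extra_h) for the smallest canvas
    with ratio target_w:target_h that holds the source at original scale."""
    # Compare aspect ratios by cross-multiplication to stay in integers.
    if width * target_h >= height * target_w:
        # Source is at least as wide as the target ratio: keep width, grow height.
        canvas_w = width
        canvas_h = -(-width * target_h // target_w)  # ceiling division
    else:
        # Source is taller than the target ratio: keep height, grow width.
        canvas_h = height
        canvas_w = -(-height * target_w // target_h)  # ceiling division
    return canvas_w, canvas_h, canvas_w - width, canvas_h - height


# A 1920x1080 (16:9) hero image extended to a 9:16 mobile frame.
canvas_w, canvas_h, extra_w, extra_h = outpaint_canvas(1920, 1080, 9, 16)
print(canvas_w, canvas_h, extra_w, extra_h)  # 1920 3414 0 2334
```

In this example 2334 of the 3414 rows, roughly 68% of the final frame, would be newly generated. Whether that extra height is split evenly above and below the subject is a design choice, and in practice it may be easier to crop the source toward the subject first so less of the frame depends on outpainting.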

Expectation Management and Technical Realities

There is a temptation to believe that these tools eliminate the need for a designer. In reality, they transform the designer into a high-speed curator and editor. There are still several areas where the technology requires human intervention:

1. Hands and Fine Detail: Despite the advancements in Nano Banana Pro, complex human anatomy can still fail in certain poses. For high-stakes ad creative, expect to do a “pass” with a traditional editor to fix small anomalies.

2. Color Precision: If your brand requires a very specific hex code for its primary color, getting the AI to hit that exact shade via prompting is nearly impossible. Use the AI to get “close,” then apply a LUT (lookup table) or a color balance layer in post-production to bring the asset into brand alignment.

3. Logical Consistency: The model has no explicit understanding of physics. It might place a shadow in a direction that contradicts the light source. While this might not matter for a quick social post, it can make a high-res landing page look amateurish.
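For the color precision point in the list above, a quick numeric check shows how far a generated shade sits from the brand color. This is a minimal sketch using plain per-channel RGB differences, which is not a perceptual color metric; the hex values are invented for illustration, and `hex_to_rgb` and `channel_offsets` are hypothetical helpers.

```python
def hex_to_rgb(hex_code):
    """Convert a '#RRGGBB' string to an (r, g, b) tuple of ints."""
    digits = hex_code.lstrip("#")
    if len(digits) != 6:
        raise ValueError("expected a 6-digit hex code")
    return tuple(int(digits[i:i + 2], 16) for i in (0, 2, 4))


def channel_offsets(sampled_hex, brand_hex):
    """Per-channel amount to add to the sampled color to reach the brand color."""
    sampled = hex_to_rgb(sampled_hex)
    brand = hex_to_rgb(brand_hex)
    return tuple(b - s for s, b in zip(sampled, brand))


# Invented example: a color sampled from a generated image vs. a brand orange.
offsets = channel_offsets("#F2A03A", "#FFA500")
print(offsets)  # (13, 5, -58)
print(max(abs(o) for o in offsets))  # 58
```

Here the blue channel is the furthest off, which suggests where a color balance adjustment should start. The offsets are only a rough guide; the final correction is still best judged by eye in your editing tool.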

Uncertainty Factor: As models continue to evolve, the “prompt” is becoming less important than the “input image.” We are entering an era of “Reference-Driven Design.” Creators who master the ability to feed the AI specific structural references will outpace those who rely solely on text descriptions. However, exactly how much control we will have over “Seed” consistency across different model versions remains an open technical question in the industry.

The Operator’s Conclusion

The shift from manual creation to AI-assisted production isn’t about working less; it’s about producing more at a higher frequency. For an indie maker, the ability to generate a cohesive ad set—complete with variations for A/B testing—without a five-figure creative budget is a massive leverage point.

By using Nano Banana as the stylistic engine and the tools within Banana Pro to refine and extend those visuals, you move away from the “one-off” generation trap. You begin to build a repeatable system where the AI handles the bulk of the visual synthesis, while you handle the strategic placement and final polish.

In this workflow, the “High-Friction” parts are actually the most valuable. The time you spend correcting the AI, masking out errors, and ensuring that your video matches your static hero image is where the quality is baked in. It is the human-in-the-loop that turns a generic AI output into a professional campaign asset. Success in this space requires a sober understanding of the tool’s capabilities and a disciplined approach to post-processing, ensuring that every asset, from the smallest social tile to the largest hero banner, feels like it belongs to the same story.